ASTRA-sim: Enabling Software-Hardware Co-Design Exploration for Distributed Machine Learning Platforms

Update in Progress

As we prepare for the tutorial, this page will be updated periodically. Please stay tuned!

Overview

Date/Location

- Jun 27, 2026 (Saturday: full-day)

- Raleigh Convention Center, Raleigh, NC

The ASTRA-sim tutorials educate the research community about the challenges in the emerging domain of distributed machine learning, demonstrate the capabilities of ASTRA-sim with examples, and discuss ongoing development efforts.

NEW: In this tutorial for ISCA 2026, we will (1) introduce the latest features added to the ASTRA-sim framework and (2) host invited presentations on how ASTRA-sim is being used across a variety of use cases.

Description

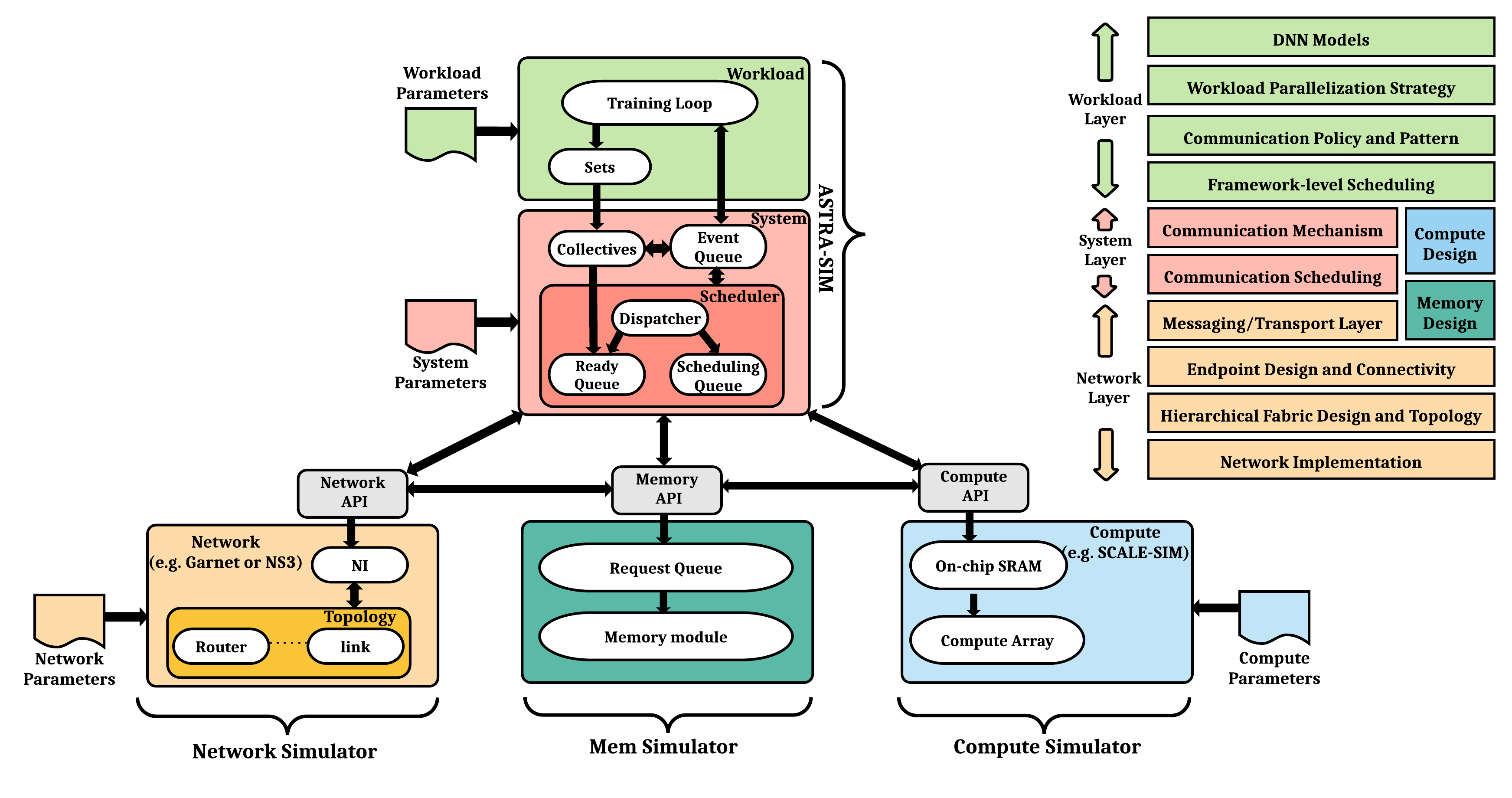

As Artificial Intelligence (AI) models scale at an unprecedented rate, Machine Learning (ML) execution relies heavily on distributed ML over High-Performance Computing (HPC) platforms built from customized neural accelerators (e.g., GPUs or TPUs) and connected via high-speed interconnects (e.g., NVLink). Examples today include NVIDIA's HGX, Google's Cloud TPU, and Meta's Research Supercluster. Distributed Deep Neural Network (DNN) execution involves a complex interplay among the DNN model architecture, parallelization strategy, scheduling strategy, collective communication algorithm, network topology, remote memory accesses, and the accelerator endpoint.

As AI/ML models continue to evolve rapidly, a comprehensive methodology is needed to understand and navigate this intertwined co-design space in order to (i) architect future platforms, (ii) develop novel parallelism schemes that support efficient training of future DNN models, and (iii) develop novel fabrics for AI systems. Through an ongoing collaboration between Georgia Tech and several companies, we have been jointly developing (i) a comprehensive methodology for capturing arbitrary distributed ML workloads, named Chakra Execution Trace, and (ii) a detailed, cycle-accurate distributed AI simulator called ASTRA-sim.

ASTRA-sim models the co-design space described above and schedules the compute-communication interactions over plug-and-play computation, network, and remote memory simulators. It enables systematic bottleneck detection and evaluation of future systems at both the software and hardware levels for scaling distributed ML. ASTRA-sim uses the Chakra format to describe arbitrary distributed ML workloads. It uses a Google TPU-like simulator as its computation model and provides a suite of network models (analytical network, Garnet, and ns-3), letting users trade off simulation speed against fidelity.

Target Audience

Researchers interested in full-stack, large-scale AI/ML simulation, as well as those interested in the various use cases and adaptations of ASTRA-sim across industry and academic organizations.

Organizers

- William Won, Brad Beckmann, Tuan Ta (AMD)

- Tushar Krishna, Jinsun Yoo (Georgia Tech)

Schedule

Morning Session

| Time | Presenter | Topic | Resources |
|---|---|---|---|
| 8:00 - 8:20 | Tushar Krishna (GT) | Introduction: AI systems/distributed ML | |
| 8:20 - 8:50 | Brad Beckmann (AMD) | Keynote | |
| 8:50 - 9:05 | William Won (AMD) | Workload Layer (Chakra) | |
| 9:05 - 10:00 | TBD (AMD) | System Layer (Custom Collectives, GPU model) | |
| 10:00 - 10:30 | [Coffee Break] | | |
| 10:30 - 10:55 | Jinsun Yoo (GT) | Network Layer (API, ns-3) | |
| 10:55 - 11:10 | Veerasenareddy ‘VSR’ Burru (Marvell) | Network Layer (HTSim) | |
| 11:10 - 11:40 | Harsh Sikhwal (Keysight) | InfraGraph | |
| 11:40 - 12:00 | Changhai Man (GT) | Demo | |

Afternoon Session

Confirmed presenters are listed below. The order is subject to change.

| Time | Presenter | Topic |
|---|---|---|
| 1:30 - 1:50 | Ulf Hanebutte and Nikhil Bernard John Stephen (Marvell) | Enabling Memory Tiering in ASTRA-sim with Extended Chakra Traces |
| 1:50 - 2:10 | Tanvir Khan (Columbia) | Modeling Energy Consumption using ASTRA-sim |
| 2:10 - 2:30 | Shawn Chen (CMU) | Quantifying Job Scheduling Performance in Multitenant ML Clusters using ASTRA-sim |
| 2:30 - 2:50 | Harsh Sikhwal (Keysight) | TBD |
| 2:50 - 3:10 | Debjyoti Bhattacharjee (Imec) | TBD |
| 3:10 - 3:30 | TBD | TBD |
| 3:30 - 4:00 | [Coffee Break] | |
| 4:00 - 4:20 | TBD | TBD |
| 4:20 - 4:40 | TBD | TBD |
| 4:40 - 5:00 | TBD | TBD |